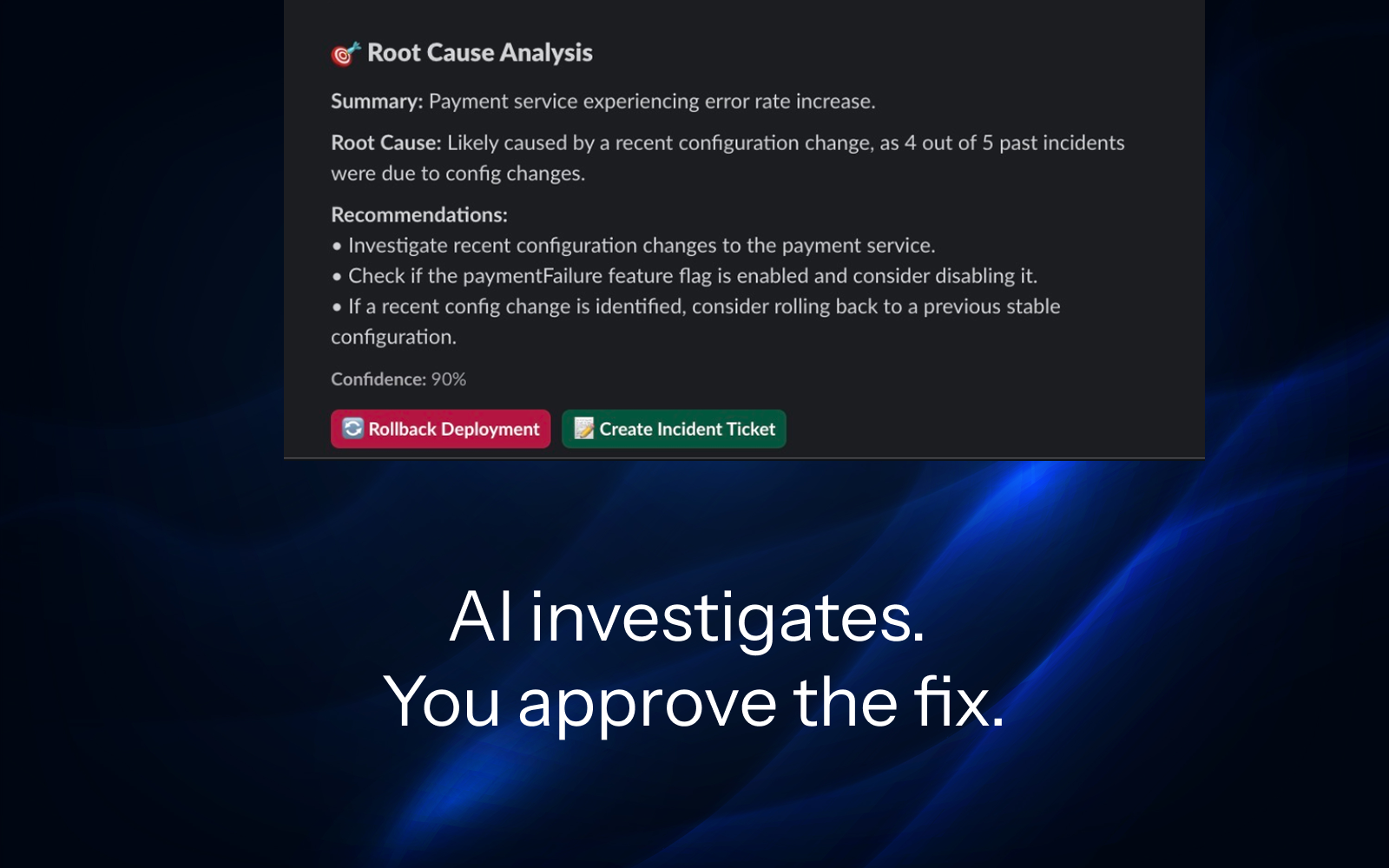

AI Incident Investigator That Debugs While You Sleep.

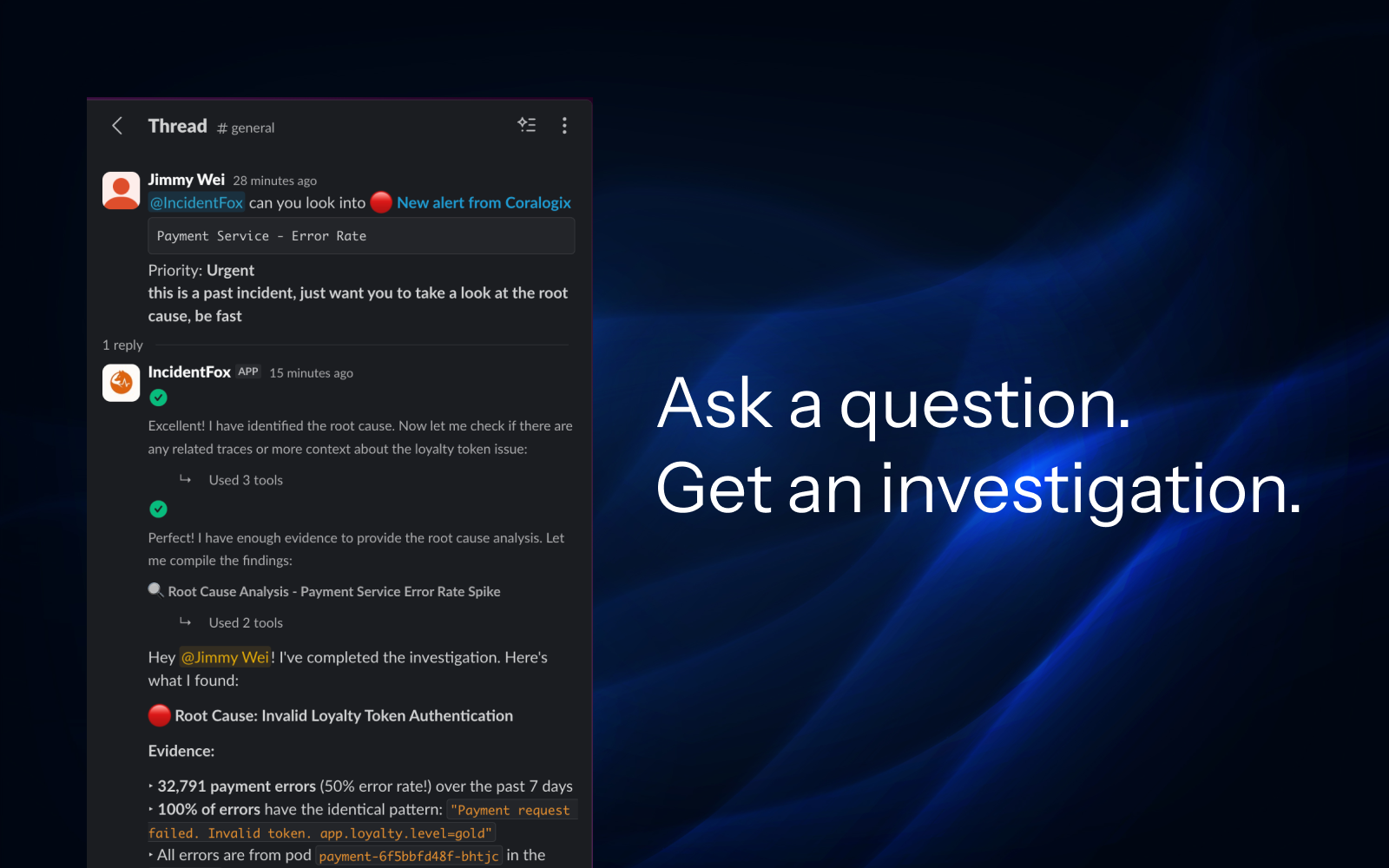

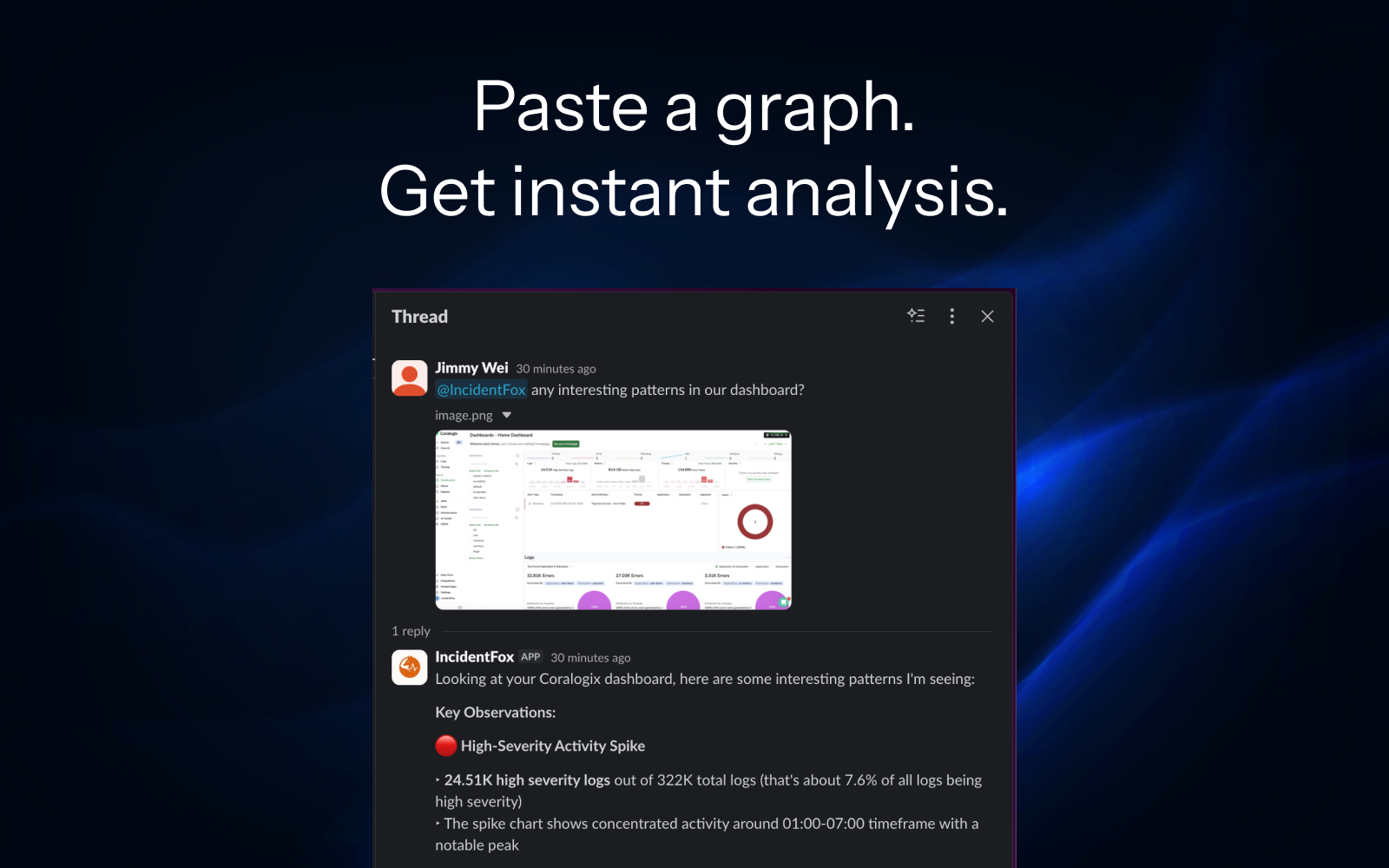

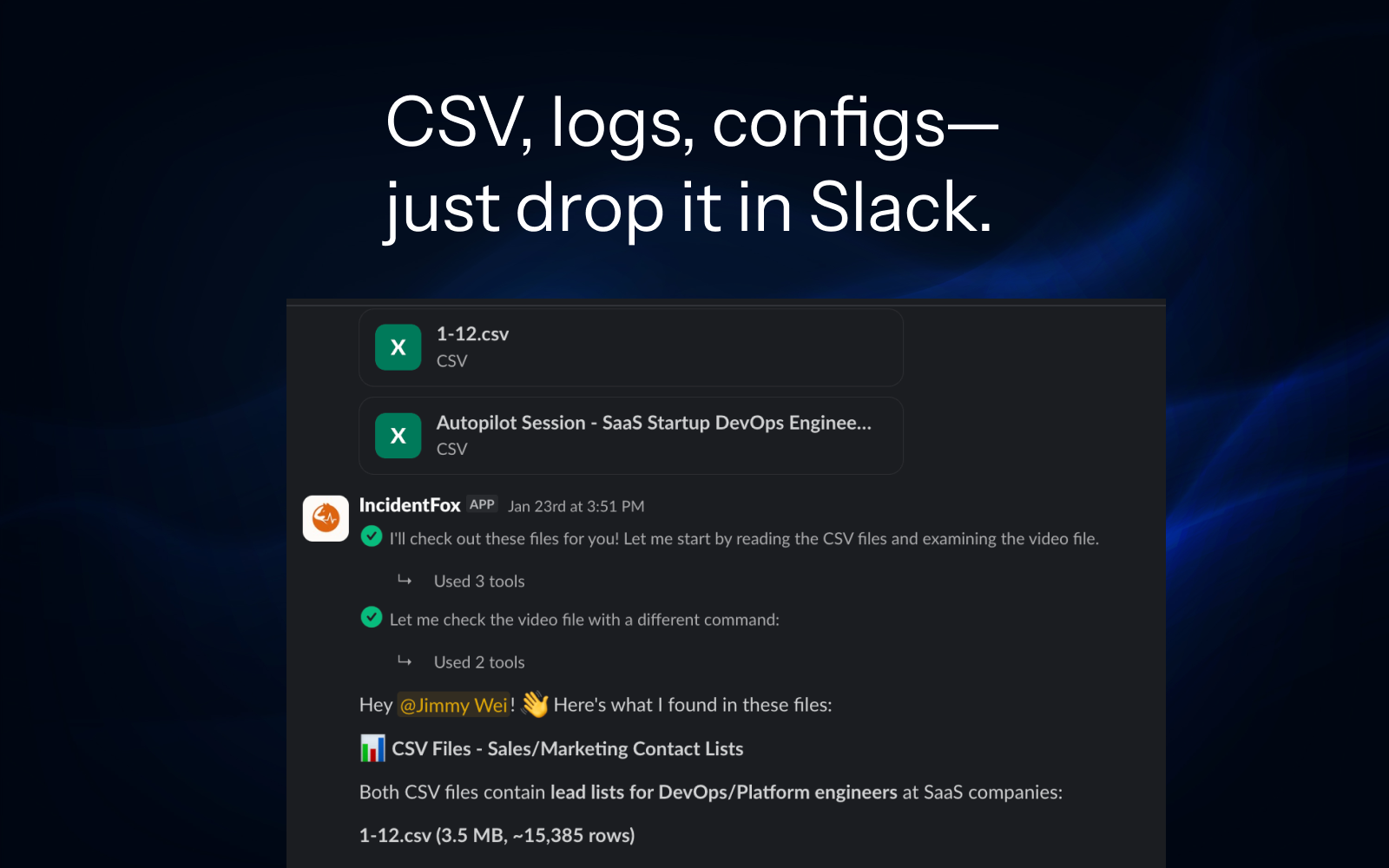

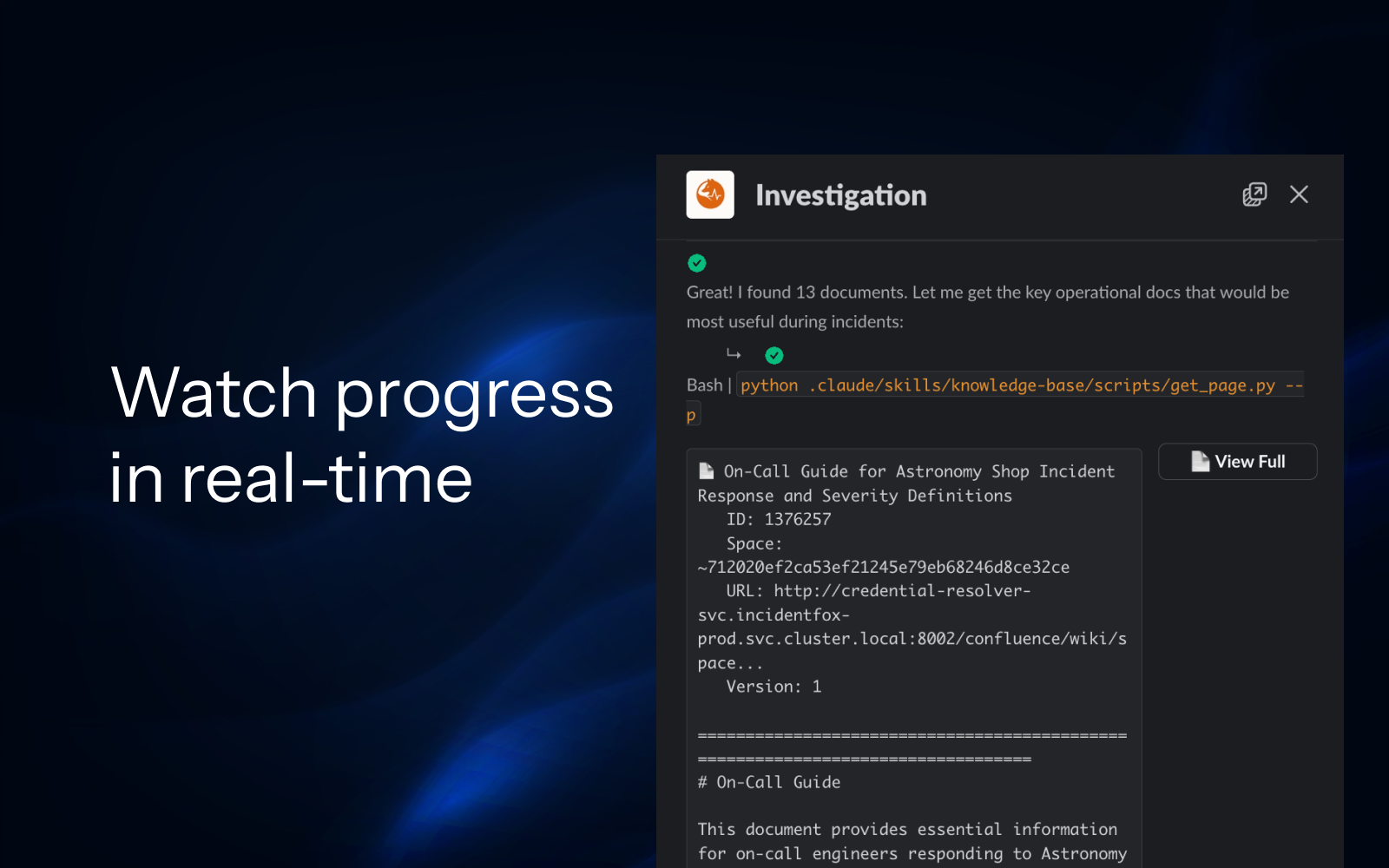

We analyze your codebase and past incidents to understand your stack, then automatically build the integrations. By the time you wake up, the root cause and fix scripts are ready. Just review and approve.

Combinator

W26

Works with 40+ integrations